First of all, I failed high school. This was not a problem with intelligence, nor with schoolwork at all. It was merely because of problems at home, in a dysfunctional family fraught with distracting emotional turmoil and typical teenage anxiety on my part. This was mended later, after a long interruption.

Secondly, I failed in the US Navy. Although I always did my job well and was very bright and capable, I did not obey the primary law of the armed forces: no matter who is right -- I am wrong.

Having been removed from the Navy with an "honorable" but general discharge, I then set forth to mend the first problem. I took and passed tests which allowed me to enter college for a degree in engineering. But, sure enough, I eventually failed that as well.

Due to issues with living in Lubbock, Texas, problems with a failed marriage, and problems with a tornado that destroyed my job -- I just left for California without ever getting a degree. These are not so much excuses -- merely facts. I was to blame for most of those problems as well. I cannot be blamed for the tornado, however.

In the years that followed there were many other ups and downs, but there were great successes in my life, finally. I was able to learn about computers to a very great depth, including digital electronics, systems engineering and software engineering. This also occurred during a time of explosive growth in the use of computers for everything from space, military, business, art, music and robotics to medicine and home recipes, which was all very fortunate for my career.

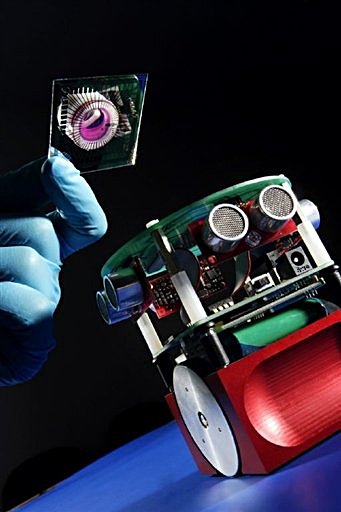

The one category, robotics, was my most favored, and the one in which I buried myself the furthest. Sadly, however, the USA was not so interested in robots in comparison to Japan or other countries. So this was not a sustainable career choice.

And therein lies my most dismal failure. I had intended to develop an "autonomous being", a partly robotic, partly computational "animal" -- and thought that if I worked hard enough and studied all the necessary sciences, I could surely accomplish this feat within my lifetime. It did not necessarily need to be human-like, but certainly most people would identify with a human-like "being" more than any other.

As time went on, the number of disciplines I found necessary to study began to mount, as did the interruptions from the necessity of earning a living, and there were glimpses of the failure I would someday feel so sadly about. For one thing, I could not merely depend on knowing electronics, which is in itself a complexity which can consume one's mind entirely. The details for creating integrated circuitry, with the myriad molecular surface interactions, electron tunneling, metallurgical and chemical effects and so forth, involve entire fields of science unto themselves.

I could not depend on my knowledge of physics, which was also in depth, but certainly only a minute fraction of the amount I would need to know if I were to truly learn the secrets to creating an "autonomous being".

Even the overlap between electronics and physics, at the junction between the leptons and quarks of quantum mechanics, was a problem so difficult that even Einstein faced failure. And I am certainly no Einstein.

But beyond those issues and many others regarding the sheer number of scientific disciplines I would need to master, there was the lack of understanding, generally, of what provides animals and especially humans with their psychological and physiological "computational brain" abilities at all. What gave them their autonomy, their consciousness and sensory faculties? It was possible to trace out neuronal pathways, nerve endings and all that, but was there also some "magical" substance that could not be generated mechanically or biochemically?

Great arguments along this line persist and they overlap many philosophies, sciences and religions. What constitutes life and bodies and minds? Is life something that can be "designed" by creatures as limited as ourselves? Or does it require supernatural Gods? Or is it only something that emerges from the muck -- completely unguided, completely by accident? I don't know, although it seems to be the "accidental" one.

Anyway I failed. I am old enough now to know I never will accomplish that lifelong goal. I shall never devise any such thing as an "autonomous being". And what is worse is that I may never even understand what such a thing really is. The complexity is just too great for my inadequate mind. Perhaps it is too great for any mind.

I did create many "self-organized" programs. They perhaps touch upon certain tiny pieces of something that could emerge as an "autonomous being", but certainly they were too simple in themselves to count. Maybe if I wrote a million more such programs, and let them fight it out in the cybernetic arena, just by accident, and perhaps only for a few milliseconds -- I might have provided for the existence of "autonomous beings." But that would not be a success. That would merely be an accident.

Epilogue:

I failed, yes. But then all of our existence as true autonomous beings could be merely an accident. We are a kind of failure of the universe. The universe -- just for a little while -- failed! It failed to exhibit the usual, normally expected, increasing disorder. It didn't "do entropy" correctly. Not for the last 3 or 4 billion years, at least.

If the universe failed in this, it means that something, far in the distant past, failed even more so. Because at some point in time, whether at the point of the "Big Bang" or in some other "Little Bangs", the universe was suddenly very orderly (so there is something from which disorder is being made). And by creating "autonomous beings" like ourselves, it made a puzzlingly profound order from the chaos.

But, don't you worry. We shall make up for this lack of entropy by manufacturing an extra amount. We always have.